Alltold

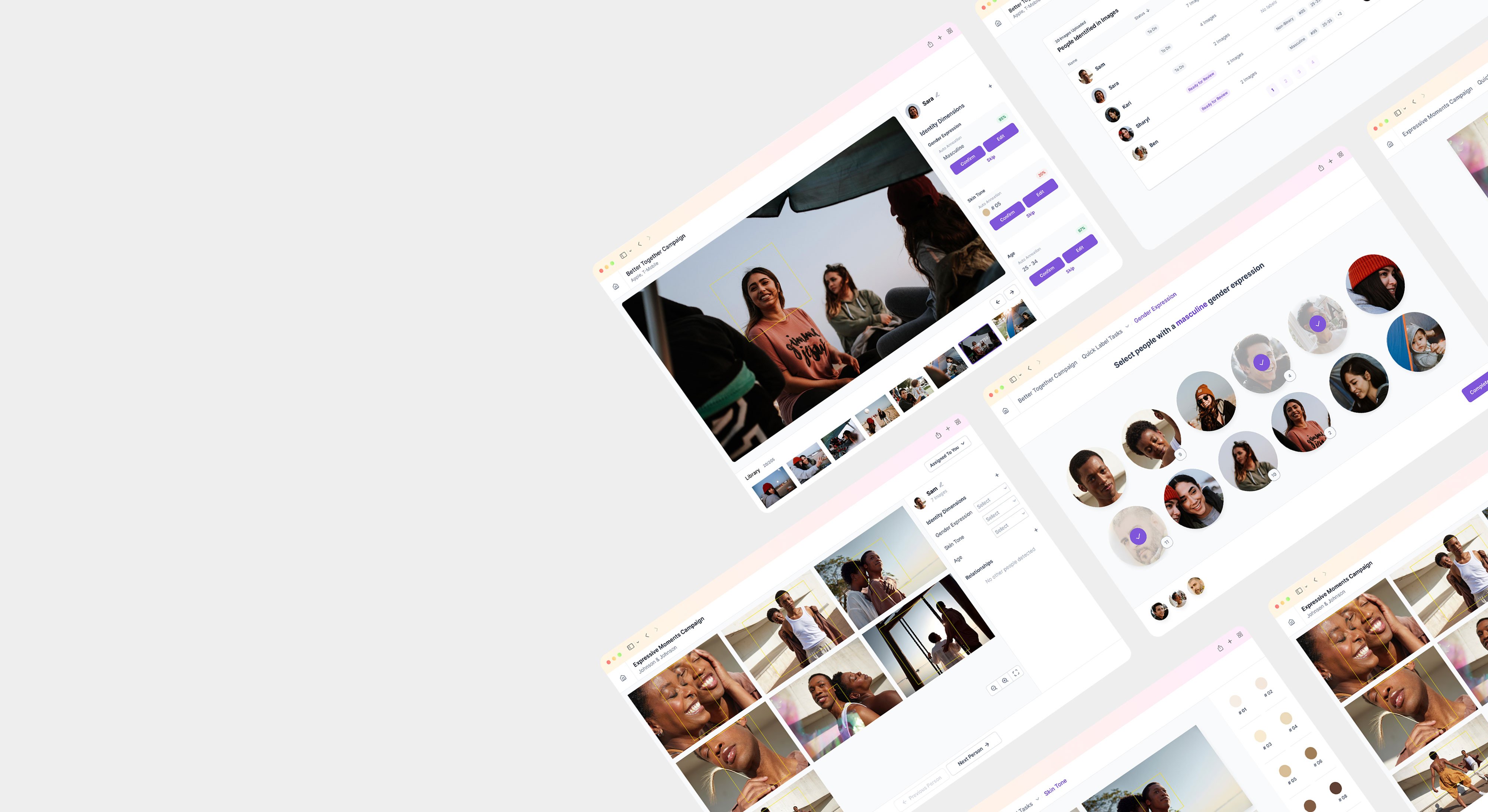

Developing an AI annotation tool to achieve inclusive media representation

Many companies talk about increasing representation in their media. But tracking progress can be tricky, especially for companies with a massive catalog of content.

Alltold came to us with a brilliant idea: they wanted to create an AI-enhanced annotation tool to accelerate the shift to more inclusive and inspiring representation of all people in all media. However, as a new startup they didn't have a user experience expert on their team and were looking for expert guidance to help define an engaging and effective product experience. We were happy to help.

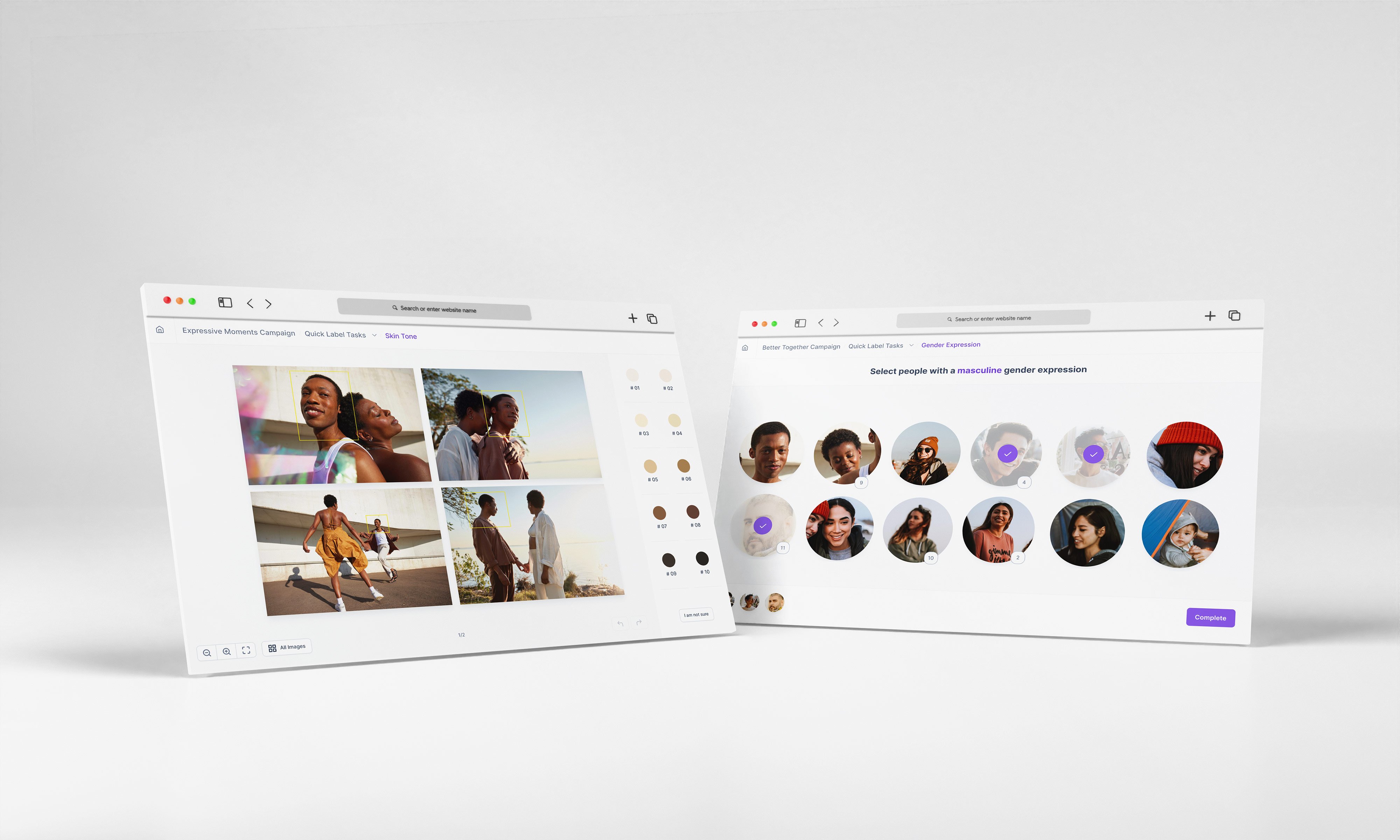

In this context, an annotation tool is software that tags content with relevant attributes so that it can be sorted into groups. By creating a new kind of annotation product, Alltold could help companies understand how well people of diverse identity groups are represented across their content. Companies could then establish a data-driven representation baseline and track progress toward their representation goals.

Our process

To guide Alltold into product development, we set out on a design research sprint. Across three weeks of workshops, hypotheses, and testing, we explored opportunities to build a superior workflow for an engaging, effective annotation product. By the end, we’d compiled recommendations and actionable next steps to help Alltold define their product along with its goals, challenges, and requirements.

Here’s a glimpse into our process, our guiding principles, and a few things we learned along the way.

1) AI can be used to enhance human workflows, not replace them.

As we generated opportunities across the experience map, we focused on building a healthy human-AI relationship. When AI isn’t there to take over, but rather to help make the job easier, we show respect for the human in the process.

Instead of relying on AI to provide all content tags, we can use it to provide initial tags that are then verified by the human annotator, thus lightening the workload and maximizing efficiency. Layering automated and human input also improves accuracy – a crucial point for Alltold’s annotation product. For a strong, collaborative workflow, human annotators need to be able to trust in the precision of AI-powered annotation.

We realized that a better metaphor for AI tooling might be a non-player character that annotators can recruit for support with specific tasks. This preserves the human annotator’s sense of ownership and control over their work, which helps them stay engaged.

2) AI can streamline and simplify repetitive tasks to improve efficiency.

We focused our sprint on building a workflow that was faster, more accurate, and more engaging than current solutions. Historically, the workflow for annotating media was a rigid process of repetitively tagging content piece by piece.

We wanted to innovate on this established workflow, simplifying the task to help annotators stay focused. Are some annotation tasks simple enough for people to batch label across a range of content at once?

To test our hypothesis, we explored a solution in which annotators wouldn’t have to manually tag the same subject every time that person appears. Instead, AI would build a profile for each subject, and the subject would be tagged automatically whenever an appearance matches their profile. Profiles can be used to catalog, filter, annotate, and verify, and they scale to meet diverse user needs, from focused tasks to reviews of vast data sets.

Profiles also unlock the ability to “batch label,” boosting efficiency and streamlining the process to feel much less tedious. By reducing the repetitive nature of annotation and creating moments of ease, we not only help annotators move forward more quickly, but also build a more enjoyable and motivating process. Streamlining the process allows people to flow through the task without feeling overwhelmed, especially when working through large batches of media.

3) AI-powered tools should serve and support people’s diverse strengths and styles of working.

During our workshops, we learned that different annotators have unique practices and processes that help them perform best. So, the annotation product needed to be flexible enough to accommodate diverse annotator backgrounds and experience. It should provide guidance, personalization, and scalability to allow different workflows to co-exist without sacrificing simplicity. This way, annotators feel supported and acknowledged as a vital part of the process.

In this vein, our product vision for Alltold emphasizes onboarding for annotators, to ease the transition from older annotation tool patterns. There’s always a transition period when adopting a new digital product, and many annotators will bring established ways of working that will require some adjustment. An empathetically designed digital product should come with a plan to support users with training, clear direction, and descriptive explanatory copy throughout the product itself.

Set up for success

We loved having the opportunity to help Alltold turn their bright idea into a clearly defined product experience. As we zoomed in and out on the annotation space, we never lost focus on the product’s greater mission: to increase the equitable representation of diverse identities in media.

Throughout the process, we maintained an open dialogue with the client to keep this mission top of mind. By the end, we had illuminated a clear pathway for Alltold to build a digital product with the potential to make a big impact – not just on AI-based product experiences, but on the lived human experience. And that’s always our goal.

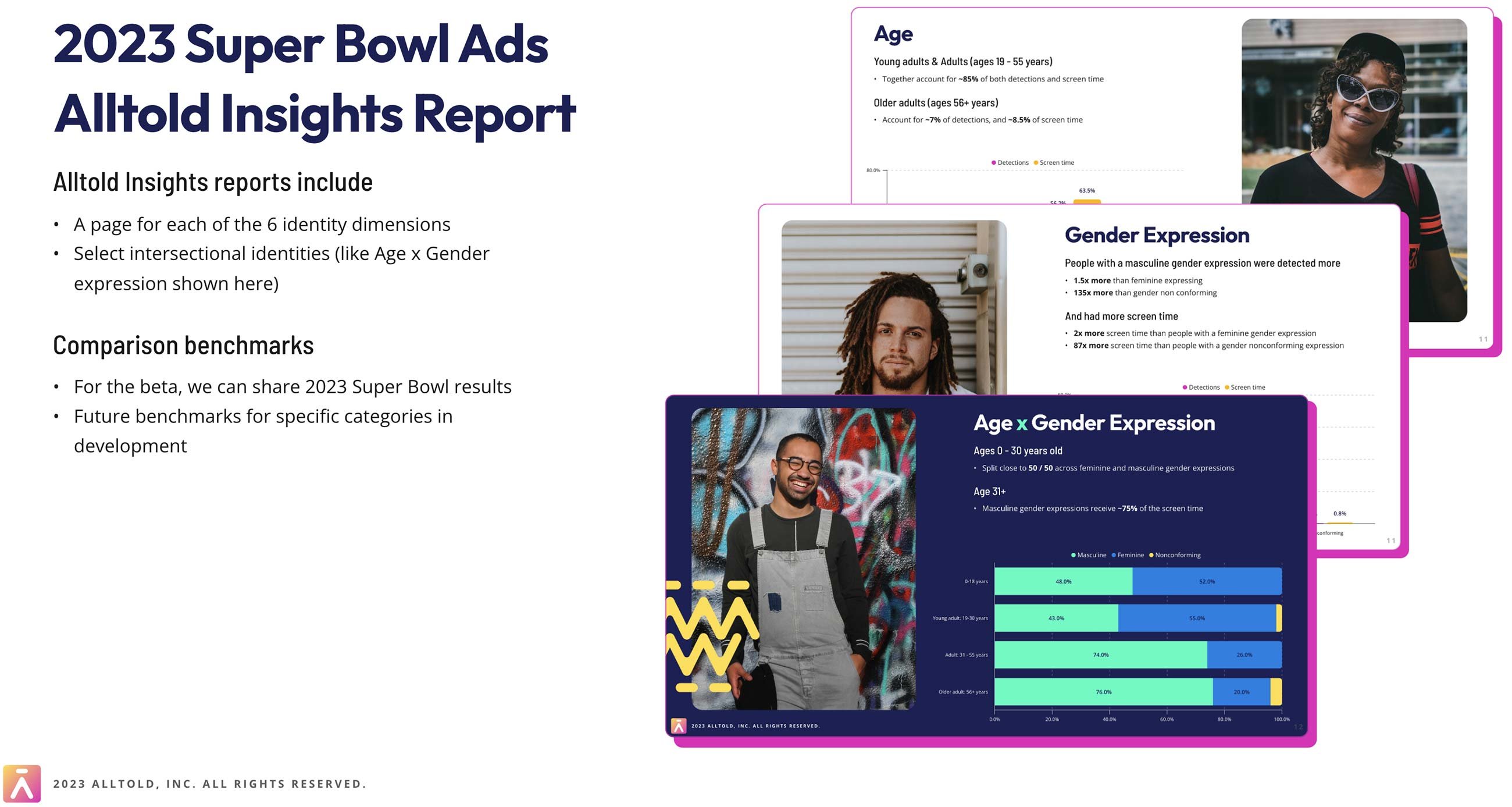

Alltold recently launched their first product, Alltold Insights for Video Ads, which they used to analyze the 2023 Super Bowl ads. If you work in marketing, Alltold is running a beta program through the end of 2023 in which customers can send in a set of ads and receive a custom representation report for them. For more information and to join the beta, check out www.alltold.ai.